Increasing the size of your MFT

Create a large MFT on a new Windows NTFS volume.

Why would anyone want to increase the size of the Windows NTFS Master File Table (MFT)?

In my experience, the Master File Table can become fragmented over time. Fragmentation can be caused by several factors, ranging from how busy the volume is to the number of small files stored on it. Although the built-in Windows defragmenter can defragment the Master File Table, it invariably runs into difficulties when the volume is nearing capacity.

Preparing a Volume

To help mitigate MFT fragmentation, the simple answer is to make the Master File Table bloat on a freshly formatted volume. If you bloat the MFT enough, it should remain in a single contiguous block on the hard drive for the lifetime of the volume. Overall, this should mean less fragmentation of the Master File Table and a performance boost.

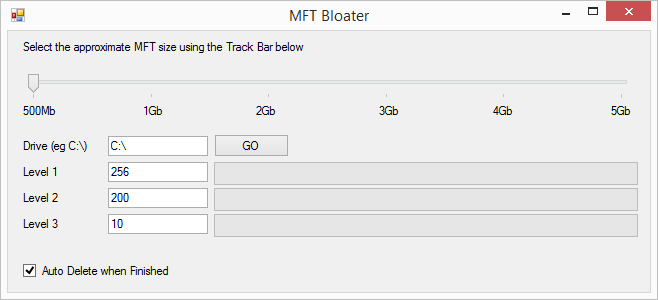

In my work, I have servers which hold millions of tiny files, so I needed a large Master File Table from the outset to prevent future problems. I’ve created a free .NET application that creates a hierarchy of nested folders on a drive and deletes them again when finished. Creating folders seems to be the easiest way of artificially causing the Master File Table to grow. Each folder consumes at least one MFT record, which is typically 1 KB (1,024 bytes), so 1 GB of MFT corresponds to roughly 1,048,576 folders or files. Because NTFS does not shrink the MFT when files or folders are deleted, the freed records remain part of the MFT and are reused by files created later. You can check the size of your MFT by using the Sysinternals tool NTFSINFO.exe, available from http://live.sysinternals.com/NTFSINFO.EXE.
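Assuming the common default of 1,024-byte MFT records, working out how many folders you need for a target MFT size is a simple division. The minimal Python sketch below does that arithmetic; the function names are hypothetical, and the record size is a parameter because some volumes (for example on 4K-native disks) may use larger records, so check the value your volume actually uses.

```python
# Assumed default NTFS file record size in bytes; verify for your own volume.
MFT_RECORD_SIZE = 1024


def records_for_mft_size(target_bytes: int, record_size: int = MFT_RECORD_SIZE) -> int:
    """Approximate number of folders/files needed to grow the MFT to target_bytes."""
    # Ceiling division: a partial record still needs a whole entry.
    return -(-target_bytes // record_size)


def mft_size_for_records(records: int, record_size: int = MFT_RECORD_SIZE) -> int:
    """Approximate MFT size in bytes for a given number of entries."""
    return records * record_size


if __name__ == "__main__":
    one_gib = 1024 ** 3
    print(records_for_mft_size(one_gib))  # 1048576
```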

The application is pre-configured, but you can change the number of levels before you click the Go button. Because it is single-threaded, it may take some time to do its stuff; it will max out a single core of your CPU and may appear to have crashed. Once the application has finished, defrag the volume so that the MFT is organised towards the front of the disk. UltraDefrag, available on SourceForge, has an “Optimise MFT” action that will consolidate the MFT.
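The .NET application itself isn’t reproduced here. As a rough illustration of what a “number of levels” setting controls, here is a hypothetical Python sketch of the same idea: build a tree of nested folders `levels` deep with `branching` subfolders in each folder, then delete it again. The function names, the `branching` parameter and the folder naming scheme are assumptions for illustration, not the application’s actual settings.

```python
import shutil
from pathlib import Path


def expected_folder_count(levels: int, branching: int) -> int:
    """Folders in a full tree: branching + branching**2 + ... + branching**levels."""
    return sum(branching ** depth for depth in range(1, levels + 1))


def create_tree(parent: Path, levels: int, branching: int) -> int:
    """Recursively create `branching` subfolders per folder, `levels` deep.

    Returns the number of folders created below `parent`.
    """
    if levels == 0:
        return 0
    created = 0
    for i in range(branching):
        child = parent / f"d{i}"  # short names keep deeply nested paths short
        child.mkdir()
        created += 1 + create_tree(child, levels - 1, branching)
    return created


def bloat_mft(root: Path, levels: int, branching: int) -> int:
    """Create a nested folder hierarchy under `root`, then delete it again.

    On NTFS each folder needs at least one MFT record, and the MFT keeps its
    size after the folders are deleted. Returns the number of folders created
    below `root`.
    """
    # exist_ok=False: refuse to reuse (and later delete) an existing folder.
    root.mkdir(parents=True, exist_ok=False)
    try:
        return create_tree(root, levels, branching)
    finally:
        # Remove the whole hierarchy, including root, even if creation failed partway.
        shutil.rmtree(root)
```

For example, `bloat_mft(Path(r"E:\mft_bloat"), levels=4, branching=32)`, where `E:` stands in for your freshly formatted volume, would create and then remove 32 + 1,024 + 32,768 + 1,048,576 = 1,082,400 folders, a little over 1 GB of MFT at 1 KB per record. Recursing depth-first keeps memory use proportional to `levels` rather than to the total folder count, and, like the original application, the sketch runs on a single thread.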